Before You Repost: A University Student's Guide to Verifying Viral Clips During Crises

This educational article is published in collaboration between the Arabi Facts Hub (AFH) and Al-Fanar Media.

Imagine you are scrolling through your phone between lectures and come across a video showing a bombing or violent protests, with a caption that reads: "Happening now." Within minutes, your classmates have shared it in study groups or on their personal accounts, and perhaps you did the same without asking: Is this video current? And was it actually filmed in the place it claims to document?

During times of crisis, such as wars, elections, or protests, video becomes one of the most widespread and influential types of content on social media. We often treat what we see in a video as direct evidence of what is happening because images and scenes appear inherently "real."

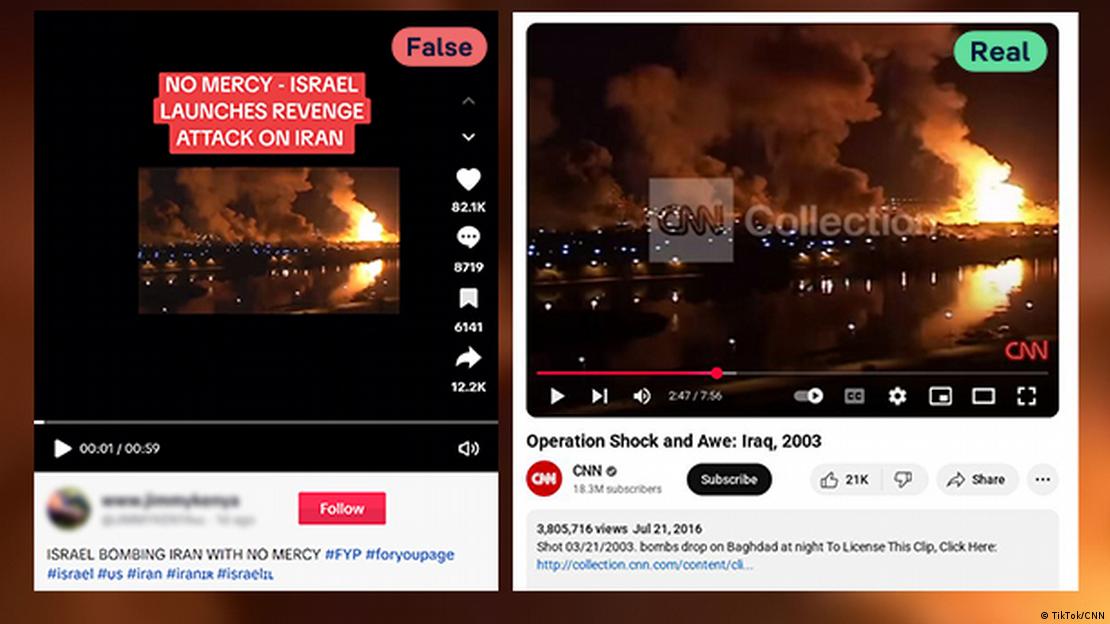

The problem is that many videos circulated during crises are not technically fake. They are real footage that has been taken out of its original time or place and re-shared as if it documented current events. In such cases, the publisher does not need to manipulate the video itself; merely changing the title or the time of publication is enough to make it misleading.

This article provides a practical guide for university students on how to verify videos before resharing them, using simple steps based on Open-Source Intelligence (OSINT) methodologies adopted in newsrooms and fact-checking platforms worldwide.

Why Do Misleading Videos Spread Quickly During Crises?

Social media platforms rely on algorithms that prioritize content that drives engagement, and content that provokes fear, anger, or shock tends to do exactly that. During crises, users tend to share videos containing dramatic or shocking scenes before verifying their authenticity.

For this reason, an old video, or one unrelated to the current event, can spread within hours and reach millions of views simply because it appears relevant to what is happening now. As it appears again and again across different accounts, the video seems more credible, even when its claim is false.

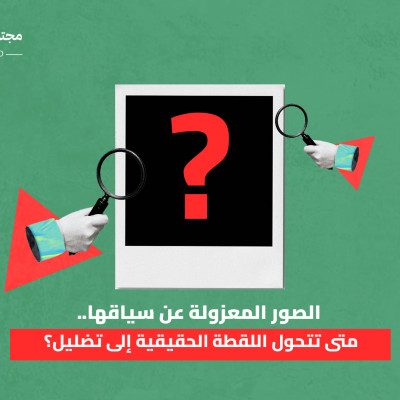

How Can a Real Video Mislead Us?

Disinformation does not always rely on altering a video or on advanced techniques. In many cases, the video is real but is presented in a context different from its original one.

For example, footage of protests that took place years ago may be re-shared with a caption suggesting it documents current events, or a video of an incident in one country may be published as if it had occurred in another. In these cases, the deception lies not in the image itself but in the information that accompanies it.

The 4D Model for Verifying Videos Before Resharing

Ask yourself these four questions before you hit "Share":

- Date

Does the video's date of publication match the event it claims to document?

You can use the YouTube DataViewer tool to extract the video's metadata, including its upload time, and then compare it with the alleged time of the event. You can also check the shadows or the sun's position using chronolocation tools to confirm that the date is consistent with the context.
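The date comparison itself can be sketched in a few lines of Python. This is a minimal illustration, assuming you have already obtained the upload time from a metadata tool and the event time from the caption. The function name `date_verdict`, the one-hour tolerance, and the sample timestamps are all hypothetical and are not taken from any real case.

```python
from datetime import datetime, timedelta, timezone


def date_verdict(uploaded_at: datetime, claimed_event_at: datetime,
                 tolerance: timedelta = timedelta(hours=1)) -> str:
    """Classify how an upload time relates to the claimed event time.

    Both values must be timezone-aware so that times recorded in
    different time zones compare correctly.
    """
    if uploaded_at.tzinfo is None or claimed_event_at.tzinfo is None:
        raise ValueError("use timezone-aware datetimes (e.g. UTC)")
    # Footage uploaded well before the event cannot be documenting it.
    if uploaded_at < claimed_event_at - tolerance:
        return "older than claimed event"
    return "not ruled out by date"


# Hypothetical timestamps, for illustration only.
upload = datetime(2019, 3, 4, 18, 30, tzinfo=timezone.utc)
claimed = datetime(2025, 6, 14, 9, 0, tzinfo=timezone.utc)
print(date_verdict(upload, claimed))  # older than claimed event
```

Note that the second verdict is deliberately cautious: an upload time that follows the event does not prove the footage is new, because old clips are often re-uploaded.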

- Description (Reverse Search)

Has this video been published before? Search for clips from it using reverse search tools.

Extract key frames from the video using tools like InVID, then run a reverse search via Google Lens, Yandex, or TinEye to find the original source and context. Focus on distinctive elements such as faces or buildings to avoid false matches, and check the earliest comments for clues about previous usage. This approach is reported to reveal the 24% of visual disinformation that results from reused footage.
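To see why a reverse search can still match a clip that has been re-encoded or brightened, it helps to look at a simple image fingerprint. The sketch below uses an "average hash", a well-known teaching technique; it is not a description of how Google Lens, Yandex, or TinEye work internally. It assumes each frame has already been shrunk to a tiny grayscale grid, and the 4x4 grids and function names are hypothetical examples.

```python
def average_hash(pixels: list[list[int]]) -> int:
    """Return a bit fingerprint of a small grayscale grid (values 0-255).

    Each bit is 1 where the pixel is brighter than the grid's mean.
    """
    flat = [value for row in pixels for value in row]
    mean = sum(flat) / len(flat)
    bits = 0
    for value in flat:
        bits = (bits << 1) | (1 if value > mean else 0)
    return bits


def hamming_distance(a: int, b: int) -> int:
    """Count the bit positions where two fingerprints differ."""
    return bin(a ^ b).count("1")


# Hypothetical 4x4 grids standing in for downscaled video frames.
original = [[10, 10, 200, 200],
            [10, 10, 200, 200],
            [200, 200, 10, 10],
            [200, 200, 10, 10]]
# The same scene, uniformly brightened (as after re-encoding or filtering).
brightened = [[value + 15 for value in row] for row in original]
# An unrelated scene with a different light/dark layout.
unrelated = [[200, 10, 200, 10],
             [10, 200, 10, 200],
             [200, 10, 200, 10],
             [10, 200, 10, 200]]

print(hamming_distance(average_hash(original), average_hash(brightened)))  # 0
print(hamming_distance(average_hash(original), average_hash(unrelated)))   # 8
```

A small distance suggests the two frames show the same scene; a large one suggests they do not. In practice the reverse search tools do this matching for you, which is why choosing a clear, distinctive frame matters.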

- Distance (Location)

Can the filming location be determined through buildings, signs, or roads?

Look for identifiable features such as buildings, signs, or roads, and compare them with imagery in Google Earth or WikiMapia. Use the sun and shadows to work out direction and time of day, look up coordinates with tools like GeoNames, and confirm the match against satellite images. This technique is especially useful for protests and disasters, where the exact location is crucial.
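Once you have coordinates for both the claimed location and the place where the landmarks actually match, a quick distance calculation shows how far apart they are. The sketch below uses the standard haversine formula for great-circle distance. The function name is hypothetical, and the coordinates are rounded, approximate values for two cities chosen purely for illustration.

```python
from math import asin, cos, radians, sin, sqrt

EARTH_RADIUS_KM = 6371.0088  # mean Earth radius


def haversine_km(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance in kilometres between two points in degrees."""
    phi1, phi2 = radians(lat1), radians(lat2)
    dphi = radians(lat2 - lat1)
    dlambda = radians(lon2 - lon1)
    a = sin(dphi / 2) ** 2 + cos(phi1) * cos(phi2) * sin(dlambda / 2) ** 2
    return 2 * EARTH_RADIUS_KM * asin(sqrt(a))


# Approximate, rounded coordinates used only as an example.
claimed_location = (33.89, 35.50)   # roughly Beirut
matched_landmark = (33.31, 44.37)   # roughly Baghdad

gap_km = haversine_km(*claimed_location, *matched_landmark)
print(f"Claimed and matched locations are about {gap_km:.0f} km apart")
```

A gap of hundreds of kilometres between the caption's location and the matched landmarks is a strong sign that the video was filmed somewhere else.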

- Details

Do the clothing, weather, or language align with the mentioned context?

Listen to the audio for accents or keywords that do not match the context, and compare the weather or clothing with historical data for the claimed date and place. Check the shadows and lighting to confirm that they fit the claimed time and location, and avoid relying on dialogue alone. This step completes the verification by confirming that the small details are consistent with the rest of the claim.
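The shadow check can be made concrete with some basic geometry. The sketch below estimates the sun's elevation from an object's height and the length of its shadow, then compares it with the highest elevation the sun can reach on the claimed date at the claimed latitude. It is a rough illustration only: it assumes a vertical object on flat ground, uses a common textbook approximation for solar declination, and all function names, the 3-degree margin, and the sample values are hypothetical.

```python
from datetime import date
from math import atan2, cos, degrees, pi


def elevation_from_shadow(object_height_m: float, shadow_length_m: float) -> float:
    """Sun elevation in degrees implied by a vertical object's shadow."""
    return degrees(atan2(object_height_m, shadow_length_m))


def max_sun_elevation_deg(latitude_deg: float, day: date) -> float:
    """Approximate solar-noon elevation: the highest the sun gets that day."""
    n = day.timetuple().tm_yday  # day of year, 1 January = 1
    declination = -23.44 * cos(2 * pi * (n + 10) / 365)
    return 90.0 - abs(latitude_deg - declination)


def shadow_fits_claim(measured_deg: float, latitude_deg: float, day: date,
                      margin_deg: float = 3.0) -> bool:
    """False when the sun appears higher than is possible for the claim."""
    return measured_deg <= max_sun_elevation_deg(latitude_deg, day) + margin_deg


# Hypothetical measurement: a 2 m pole casting a 1 m shadow.
measured = elevation_from_shadow(2.0, 1.0)
# Hypothetical claim: filmed at latitude 33.3 N on 21 December 2023.
print(shadow_fits_claim(measured, 33.3, date(2023, 12, 21)))  # False
```

The test only works in one direction. A sun that is too high for the claimed date and place contradicts the caption, while a lower sun proves nothing, since the video could simply have been filmed earlier or later in the day.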

Example: In June 2025, a video of an airstrike circulated on social media, claiming to document a recent attack in a regional conflict. Within hours, the video had passed two million views and was widely reshared. Verification showed, however, that the clip dated back to 2003, was filmed in Baghdad during the Iraq War, and had been broadcast by an international news network at the time. Reverse searching, together with analysis of the shadows and visible landmarks in the video, helped uncover its original location, and the accent heard in the audio showed that it was unrelated to the current event. Even so, the video circulated widely before it was corrected.

Why Do Students Fail to Detect Misleading Videos?

People are subject to cognitive biases such as the "Illusory Truth Effect," in which content that is presented repeatedly (especially visual content) comes to seem more credible regardless of its accuracy, because the brain tends to treat repetition as an implicit sign of validity. This effect is reinforced by what is known as "visual realism," in which images and videos are perceived as a direct representation of reality, which may reduce the inclination to verify them. In this context, "Generation Z" is sometimes described as one of the groups most engaged in consuming digital and visual content. This does not necessarily mean its members are more susceptible to disinformation, but the intensity of their exposure may amplify the effect of these biases.

What about AI-Generated Videos?

Despite growing interest in deepfake technologies, verification experience indicates that some misleading videos circulated during crises are not fully AI-generated; they may be real clips that have been reused outside their original context or manipulated in other ways.

The next article, the second part of this guide, focuses on how to detect AI-generated videos and on the most prominent tools and indicators that can help university students, journalists, and researchers distinguish them from real or repurposed videos.

In conclusion, in a digital environment where information spreads faster than our ability to verify it, the role of confronting disinformation is not limited to journalists or fact-checking platforms; it extends to every user who shares content via social media.

Before resharing any video, asking four simple questions about its date, original source, location, and details can prevent the spread of misleading information that could amplify or fuel a crisis.

Verification does not always require complex tools; it begins with a simple step: pausing for a moment before pressing the "Share" button.